LEARNING JOURNEY

The class visited Another World is Possible, an exhibition at the ArtScience Museum that explores how artists and designers imagine possible futures. Personally, whenever I think about the future, I tend to imagine dystopian worlds dominated by corporate power and technological control, much like the settings of Divergent, The Maze Runner or Ultraviolet. These films paint the future as a cautionary tale, warning us about the loss of individuality and freedom. This exhibition, however, offers an alternative and more hopeful vision, showcasing works fuelled by hope, creativity and resilience.

The exhibition as a whole was a strong example of visual storytelling. Each section read like a chapter of a larger narrative, inviting visitors to question whether speculative fiction can truly predict the future or whether it merely reflects our hopes and fears. On a deeper level, I learnt that these works are not only about imagining what lies ahead but also about re-examining what is happening now. Many of the stories addressed real-world issues such as inequality, marginalisation and identity, using speculative imagination as a tool for social reflection and empathy.

One section that stood out to me was the introduction to Afrofuturism, a cultural movement that reclaims the future through the lens of the African diaspora, placing Black experiences, histories and identities at the centre of speculative thought. In a world where Black voices are often overlooked, Afrofuturism becomes a powerful narrative of empowerment, freedom and possibility. I really adored this section because it reframes marginalisation not as a limitation but as a site of strength and creativity. It also made me question current social structures. Why do marginalised groups still have to fight for visibility? And what does that say about our collective failure to create an inclusive future?

Another work that fascinated me was BARC by Interactive Materials Lab. It transformed an everyday object, the barcode scanner, into an interactive weapon within a gamified installation. In its task-based gameplay, missions are printed on receipts, making the game both tactile and engaging. It reminded me of how everyday technologies can be reimagined as experiential storytelling tools. However, I could not help but reflect on the environmental implications of constantly printing receipts. It made me think about how artists sometimes prioritise concept over sustainability, and how balancing both could lead to more responsible design outcomes.

Another artwork, Cloud Scripts by Ong Kian Peng, captured my attention with its poetic integration of technology and spirituality. Using an AxiDraw pen plotter, it generated a series of talismans, a fascinating intersection of the mechanical and the metaphysical. I found it conceptually rich because it suggests that technology can mediate between the physical world and the unseen spiritual world, a theme that resonates with my own exploration of tangible human experiences and emotions.

A number of artworks were presented in video format, which I think is the most effective medium for speculative storytelling. While photography can freeze a moment, video allows emotion, sound and time to intertwine, which lets it communicate complexity and narrative depth. This reminded me of my background in film and made me wonder whether incorporating moving images might strengthen the emotional storytelling in my own project.

Overall, this museum visit was a turning point for me. Since my project already carries speculative elements, Another World is Possible gave me valuable perspectives on how design can envision alternative realities while remaining grounded in social commentary. It also raised key questions I now need to confront:

① What kind of narrative do I want my project to tell?

② What emotions do I want people to feel when they encounter it?

③ What speculative artefacts can I create to provoke empathy, curiosity and/or reflection?

Right now, I feel both inspired and overwhelmed. But I think that is the beauty of this process. The uncertainty pushes me to think more deeply about what kind of future I want to imagine through design.

EXPERIMENT 005

I was inspired by the talismans in Cloud Scripts, exhibited in Another World is Possible. Each talisman functions as a symbolic form of communication between the human and spiritual realms. What intrigued me most was not only their visual beauty but also how these symbols seemed to hold meaning. Each mark was interpretive rather than decorative. This led me to wonder: if Ong Kian Peng’s talismans are communicative bridges between human and spirit, could mine become emotional bridges between human and machine?

In this experiment, I wanted to explore how generative art could transform emotions into symbolic forms. How might such symbols function as a new visual language for emotions?

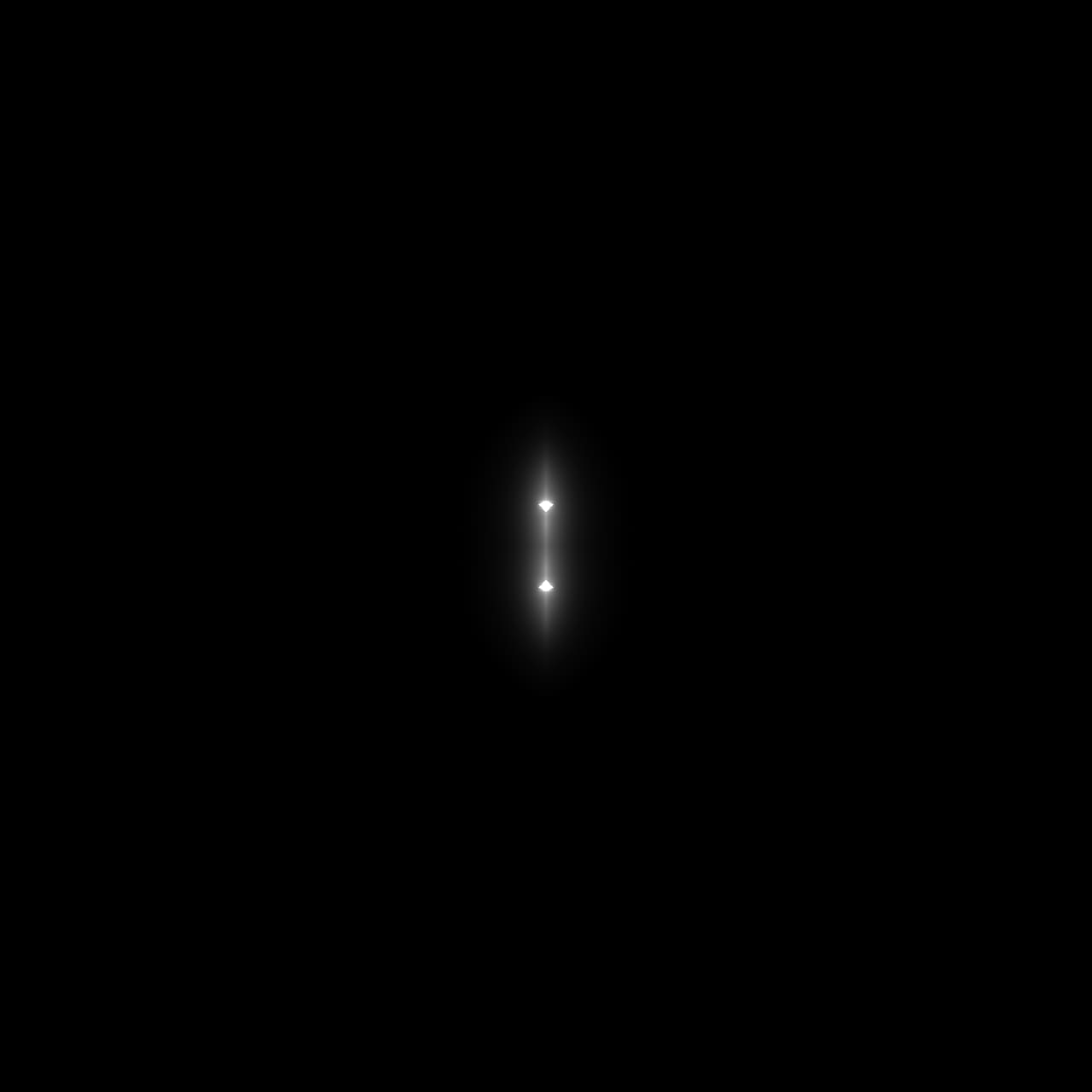

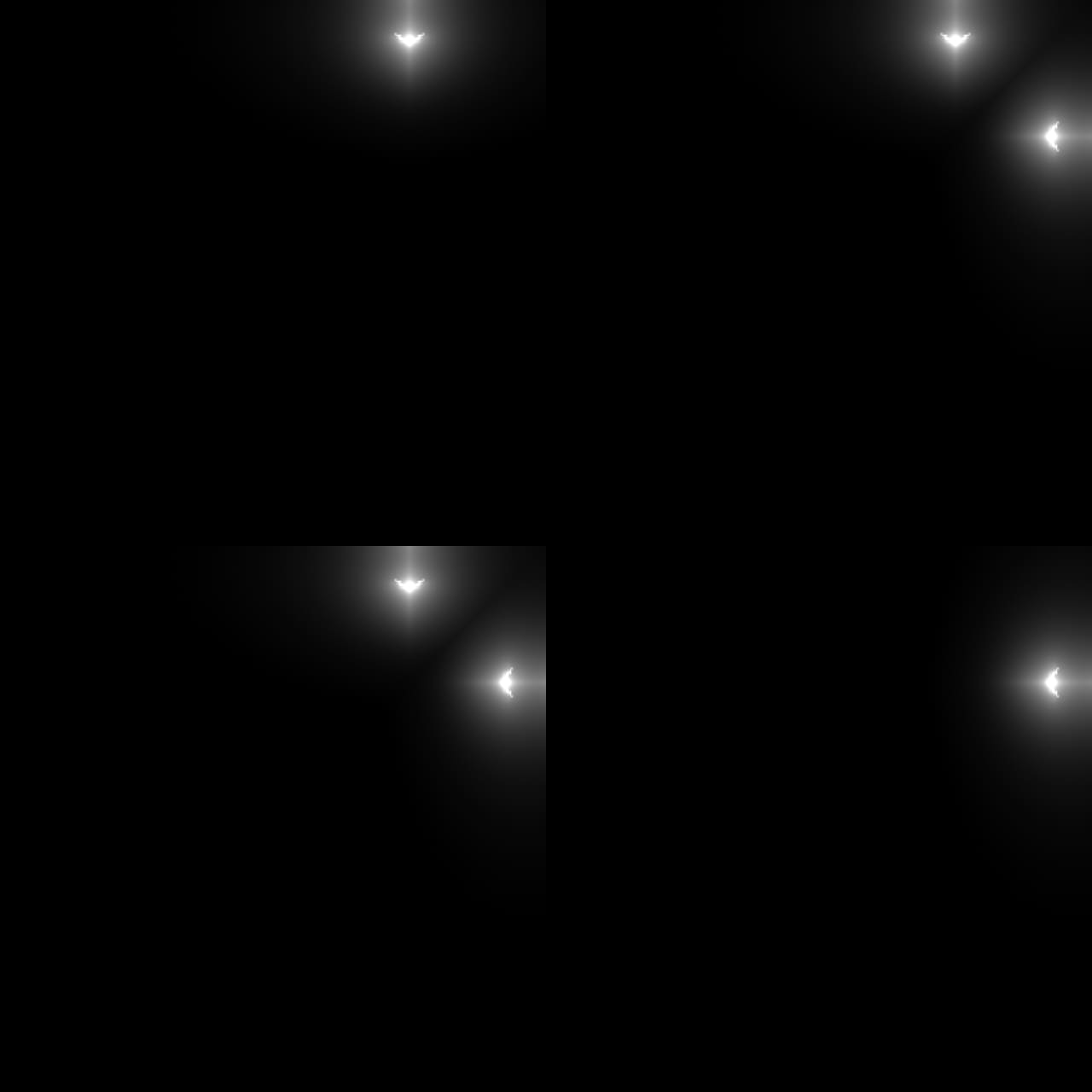

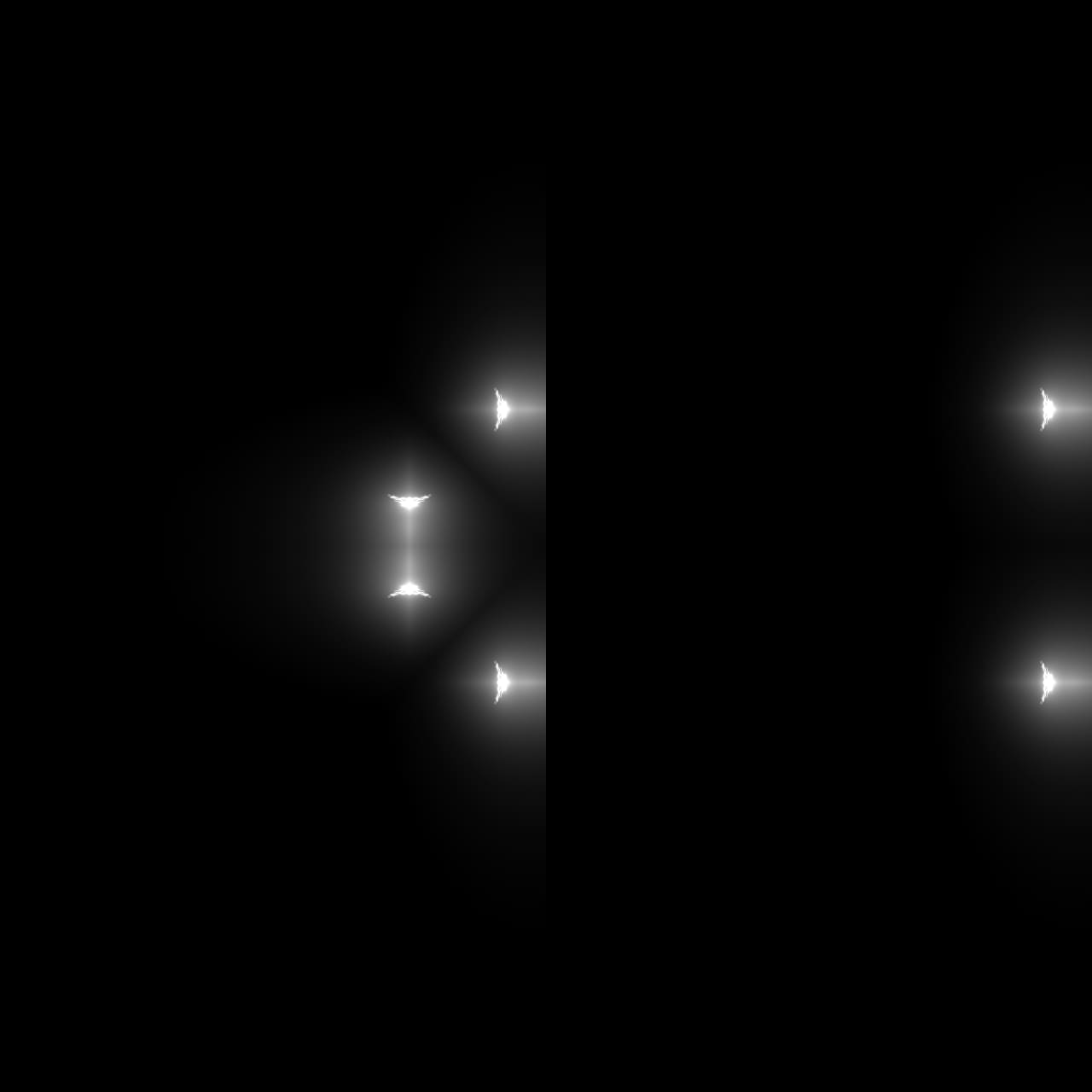

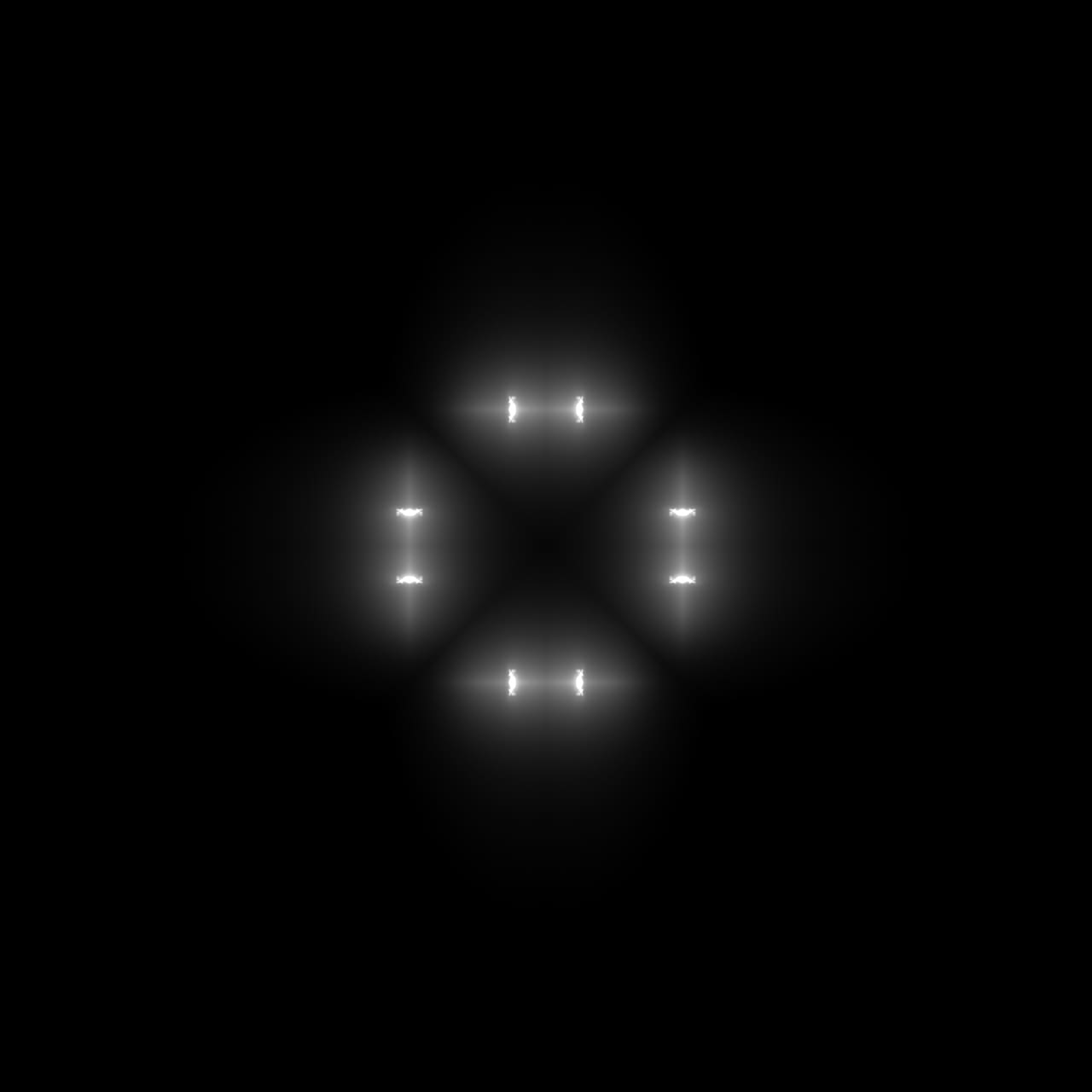

![]()

Currently, we rely on static symbols such as emoticons and emojis to express emotions in digital communication. Each of these symbols carries a fixed, predefined meaning, reducing complex feelings to simple pictograms. I therefore wanted to imagine an alternative system in which emotional symbols are adaptive rather than fixed. Put speculatively: what if emotions could design their own symbols?

For visual research, I looked into how sound vibrations at different frequencies generate unique patterns, such as Chladni figures, the patterns that sand forms on a plate vibrating at a given frequency. These examples show how variations in invisible inputs can produce visually distinct outcomes, a concept that parallels how different emotional intensities could generate unique symbolic forms. Inspired by this, I began experimenting with dynamic mirrored patterns in TouchDesigner, producing kaleidoscopic effects that shift and evolve in response to data.
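
Chladni figures are often approximated, for an idealised square plate, by the curves where cos(nπx)·cos(mπy) − cos(mπx)·cos(nπy) = 0, with the integers n and m standing in for the driving frequency. The Python sketch below is a simplified illustration under that assumption, not a physical simulation, and its function names are hypothetical. It prints the nodal lines for two (n, m) pairs as text, showing how changing an invisible input yields a visibly different figure.

```python
import math


def chladni(x: float, y: float, n: int, m: int) -> float:
    """Idealised square-plate mode shape on the unit square [0, 1] x [0, 1].

    Points where this value is (close to) zero lie on nodal lines, where sand
    would collect in this simplified model. n and m stand in for frequency.
    """
    return (math.cos(n * math.pi * x) * math.cos(m * math.pi * y)
            - math.cos(m * math.pi * x) * math.cos(n * math.pi * y))


def chladni_ascii(n: int, m: int, size: int = 21, tol: float = 0.1) -> list[str]:
    """Render mode (n, m) as rows of '#' (near a nodal line) and '.' (vibrating)."""
    rows = []
    for j in range(size):
        y = j / (size - 1)
        row = ""
        for i in range(size):
            x = i / (size - 1)
            row += "#" if abs(chladni(x, y, n, m)) < tol else "."
        rows.append(row)
    return rows


if __name__ == "__main__":
    # Two different (n, m) pairs, i.e. two "frequencies", give two different figures.
    for n, m in [(1, 2), (3, 5)]:
        print(f"mode ({n}, {m})")
        print("\n".join(chladni_ascii(n, m)))
        print()
```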

Each time a new mirror effect is added at a different angle, the visual complexity increases. By applying four mirror operations, I produced a four-sided symmetrical pattern resembling a symbolic mandala. To connect emotion with form, I mapped facial expression data to parameters within the ‘twist’ and ‘noise’ nodes, so that the visual distorts according to emotional input. In this way, emotions began to “generate” their own symbols through procedural logic.

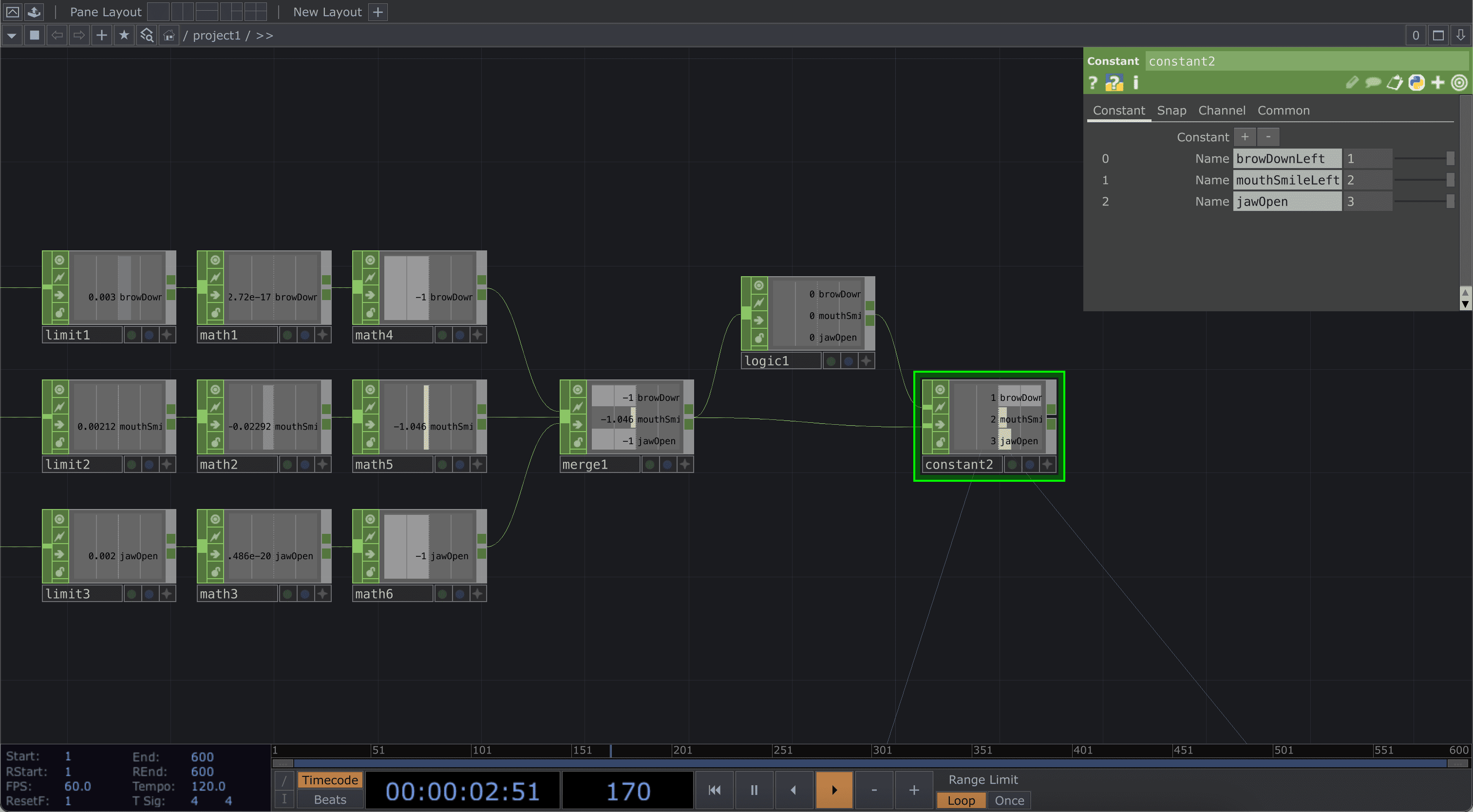
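
The mirroring and parameter mapping described above can be sketched outside TouchDesigner in a few lines of Python. This is a minimal illustration under stated assumptions, not how the TouchDesigner nodes work internally: `kaleido_fold` reflects a coordinate into one wedge of a kaleidoscope with four mirror lines, `twist` rotates points more strongly the further they are from the centre, and `remap` turns a 0-1 facial expression value into a twist amount. All three function names, the 0-3 twist range and the sample reading are hypothetical, and the noise distortion is left out.

```python
import math


def remap(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly rescale value from [in_lo, in_hi] to [out_lo, out_hi]."""
    t = (value - in_lo) / (in_hi - in_lo)
    return out_lo + t * (out_hi - out_lo)


def kaleido_fold(x, y, mirrors=4):
    """Reflect point (x, y) into the first wedge of a kaleidoscope.

    The mirror lines pass through the origin, pi/mirrors radians apart. Every
    point maps into the wedge 0 <= angle <= pi/mirrors, so whatever is drawn
    there repeats as 2 * mirrors mirrored copies around the centre.
    """
    r = math.hypot(x, y)
    wedge = math.pi / mirrors
    theta = math.atan2(y, x) % (2 * wedge)  # angle within one pair of wedges
    if theta > wedge:
        theta = 2 * wedge - theta  # second wedge of the pair: reflect back
    return r * math.cos(theta), r * math.sin(theta)


def twist(x, y, amount):
    """Rotate (x, y) by an angle that grows with distance from the centre."""
    r = math.hypot(x, y)
    angle = math.atan2(y, x) + amount * r
    return r * math.cos(angle), r * math.sin(angle)


if __name__ == "__main__":
    jaw_open = 0.8  # hypothetical 'jawOpen' reading in the range 0-1
    amount = remap(jaw_open, 0.0, 1.0, 0.0, 3.0)  # assumed twist range 0-3
    # Twist first, then fold: an arbitrary order chosen for illustration.
    x, y = kaleido_fold(*twist(0.3, 0.7, amount), mirrors=4)
    print(f"twist amount {amount:.2f} -> folded point ({x:.3f}, {y:.3f})")
```

With four mirror lines, a point and its copies rotated by 90° or reflected across any mirror line all fold to the same coordinate, which is what produces the four-sided symmetry.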

However, I faced several technical challenges. I used three facial expression inputs: ‘browDownLeft’, ‘mouthSmileLeft’ and ‘jawOpen’. My intention was to switch between three corresponding visuals using a ‘switch’ node. But I discovered that the ‘switch’ node would not move beyond ‘index2’, meaning the third expression could not be activated properly.

To resolve this, I reconfigured the data flow. First, I remapped each channel’s data range from 0-1 to 0.5-1 for better differentiation. I then merged the three channels into a single channel and passed it through a ‘logic’ node set to ‘ON when greater than zero’. Using a ‘constant’ node, I assigned each input a specific index: ‘index1’, ‘index2’ and ‘index3’. Within the ‘switch’ node, this meant:

① ‘browDownLeft’ is active within the range 1-2

② ‘mouthSmileLeft’ is active within the range 2-3

③ ‘jawOpen’ is active within the range 3-4

④ Neutral states remain within the range 0-1

In essence, when the ‘mouthSmileLeft’ channel outputs a value of ‘1’ in the ‘logic’ node, that input switches on and its state shifts from ‘index2’ to ‘index3’ in the ‘constant’ node, allowing the corresponding visual to appear. Although it took multiple trials, I eventually got the ‘switch’ node to change visuals based on real-time facial expressions. This method is also flexible, making it easy to expand the system with additional channels in future iterations.
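
The routing from facial expressions to visuals can be modelled outside TouchDesigner as a small Python function. This is a minimal sketch, not the actual node network: only the three expression names and their index order come from the text, while the threshold value, the rule that the highest index wins when two expressions are active at once, and the names `switch_index`, `select_visual` and `VISUALS` are assumptions. In this sketch the visuals are numbered from zero, so the neutral state plus three expressions needs four entries (indices 0 to 3).

```python
# Expression names and index order follow the text; everything else is hypothetical.
EXPRESSION_INDEX = {
    "browDownLeft": 1,
    "mouthSmileLeft": 2,
    "jawOpen": 3,
}
NEUTRAL_INDEX = 0
THRESHOLD = 0.3  # assumed cut-off, standing in for the 'logic' node

# Placeholder names for the four inputs of the switch (zero-based).
VISUALS = ["neutral", "brow", "smile", "jaw"]


def switch_index(channels: dict[str, float], threshold: float = THRESHOLD) -> int:
    """Return the index of the visual to show for one frame of facial data.

    Each channel becomes ON (1) or OFF (0), is multiplied by its constant index,
    and the largest result wins; if nothing is ON, the neutral visual (0) is used.
    Unknown channel names contribute nothing.
    """
    index = NEUTRAL_INDEX
    for name, value in channels.items():
        on = 1 if value > threshold else 0
        index = max(index, on * EXPRESSION_INDEX.get(name, NEUTRAL_INDEX))
    return index


def select_visual(channels: dict[str, float]) -> str:
    """Look up the visual for the current frame."""
    return VISUALS[switch_index(channels)]


if __name__ == "__main__":
    frame = {"browDownLeft": 0.05, "mouthSmileLeft": 0.82, "jawOpen": 0.1}  # made-up sample
    print(switch_index(frame), select_visual(frame))  # prints: 2 smile
```

Expanding the system in this model means adding one entry to `EXPRESSION_INDEX` and one visual to `VISUALS`, which mirrors the flexibility described in the paragraph above.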

Once the technical system was stable, I assigned each generated visual to one of Ekman’s Six Basic Emotions. Each symbol behaves as an abstract emotional signature, generated through code and shaped by affective data.

Reflecting on this process, I realised that this experiment deepened my understanding of how meaning can emerge from generative systems. While the outcome appears purely visual, its conceptual weight lies in how emotion becomes both the input and the author. Each symbol is not manually drawn but rather co-created between human expression and algorithmic logic. In a speculative sense, this experiment gestures towards a possible future where emotional communication evolves beyond language, where affect is not described but visualised and felt through generative semiotics.