MOVIE MARATHONS

Since my last discussion with Andreas, I have been struggling to generate compelling ‘what-if’ scenarios. To get unstuck, I decided to turn to science-fiction films as a way to observe how design fiction operates in cinematic contexts. This is not necessarily to look for direct references to emotion-to-visual systems, but to study how these narratives prompt reflection and speculation.

① Her by Spike Jonze, 2013 — The film follows a man who develops a romantic relationship with an artificial intelligence operating system (OS) that is personified through a female voice.

What did I like about this movie? — I was drawn to how the OS gradually develops emotional depth and relational intimacy. Beyond the storyline, what resonated with me was how the technology was embedded into everyday devices such as computers and mobile phones. This seamless integration made the speculative technology feel believable and intimate. It reminded me that design fiction is most effective when it does not appear futuristic for the sake of it, but when it quietly enters daily life. This makes us question how much we can emotionally rely on such systems.

② Uglies by McG, 2024 — Set in a dystopian future, the film portrays a society where individuals must undergo cosmetic surgery at the age of 16 to become “pretty” and gain social acceptance.

What did I like about this movie? — In one scene, the protagonist uses a mirror to visualise an enhanced version of herself. Similar to Her, the use of a familiar, everyday object appealed to me. It made me question how people might behave if such technology existed. Would it heighten insecurities rather than empower individuals? Would it push people toward physical alteration instead of self-acceptance? This reinforced my interest in mirrors and reflective interfaces as speculative tools.

③ Tron: Ares by Joachim Rønning, 2025 — The third installment in the Tron series follows an AI entity entering the real world.

What did I like about this movie? — I was fascinated by the scene where Ares scans Eve Kim’s face and interprets her expression as an “empathetic response”. The way facial data was visualised through pixelated, ASCII-like graphics and scanning overlays made emotional processing feel computational yet readable. These visual metaphors strongly influenced how I might represent emotional data visually in my own work.

There are still many films I plan to watch, but this process has already helped me realise that strong ‘what-if’ scenarios often emerge from subtle interventions into everyday life. I believe that once I settle on a single speculative framing, it will guide my next steps more clearly.

MAKING #01

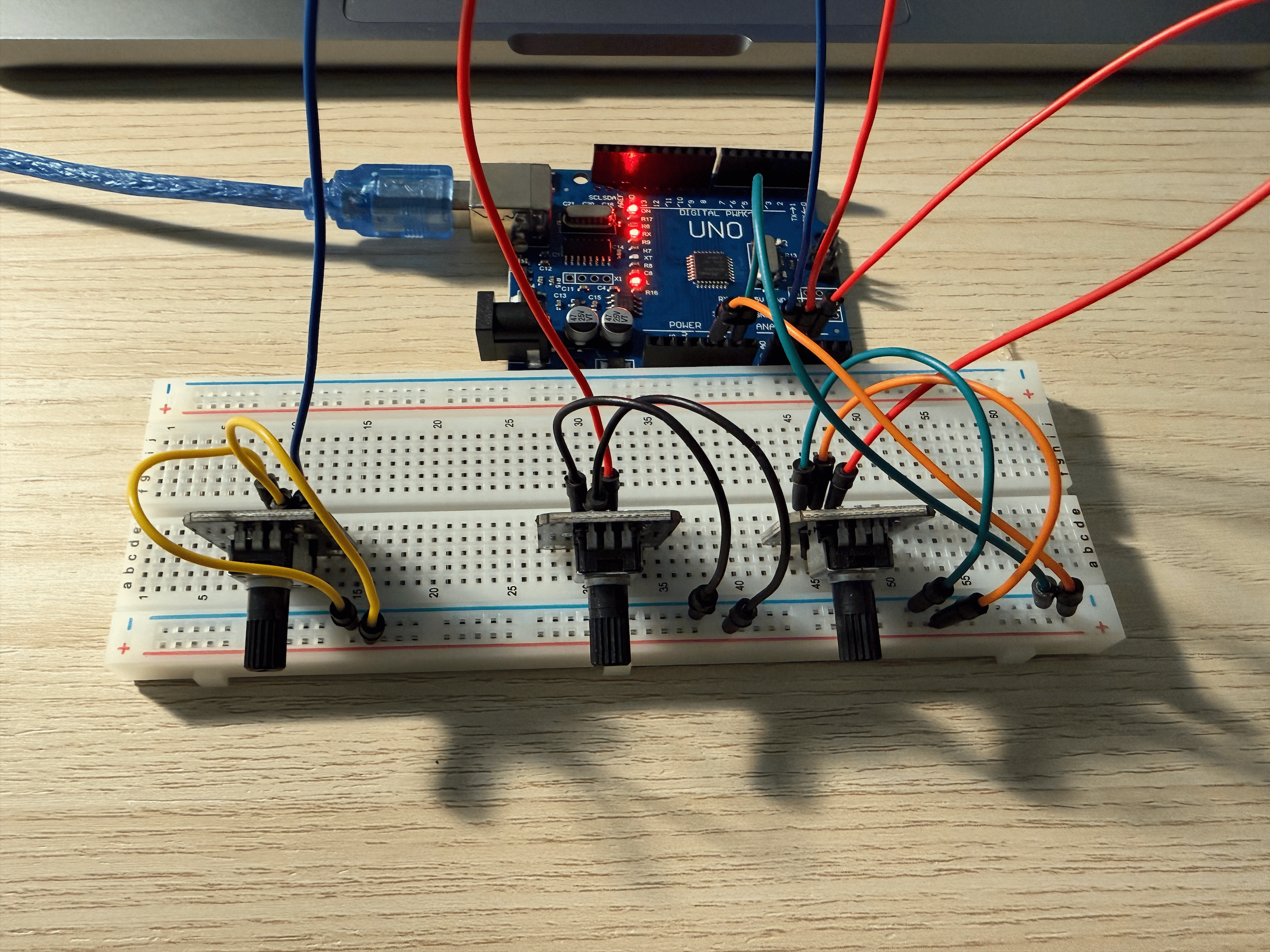

To regain momentum, I returned to the ‘Mood Mirror’ concept, but this time with a stronger focus on interaction. Based on Andreas’ dissertation feedback, I wanted to explore physical computing as a way for users to actively manipulate emotional visuals.

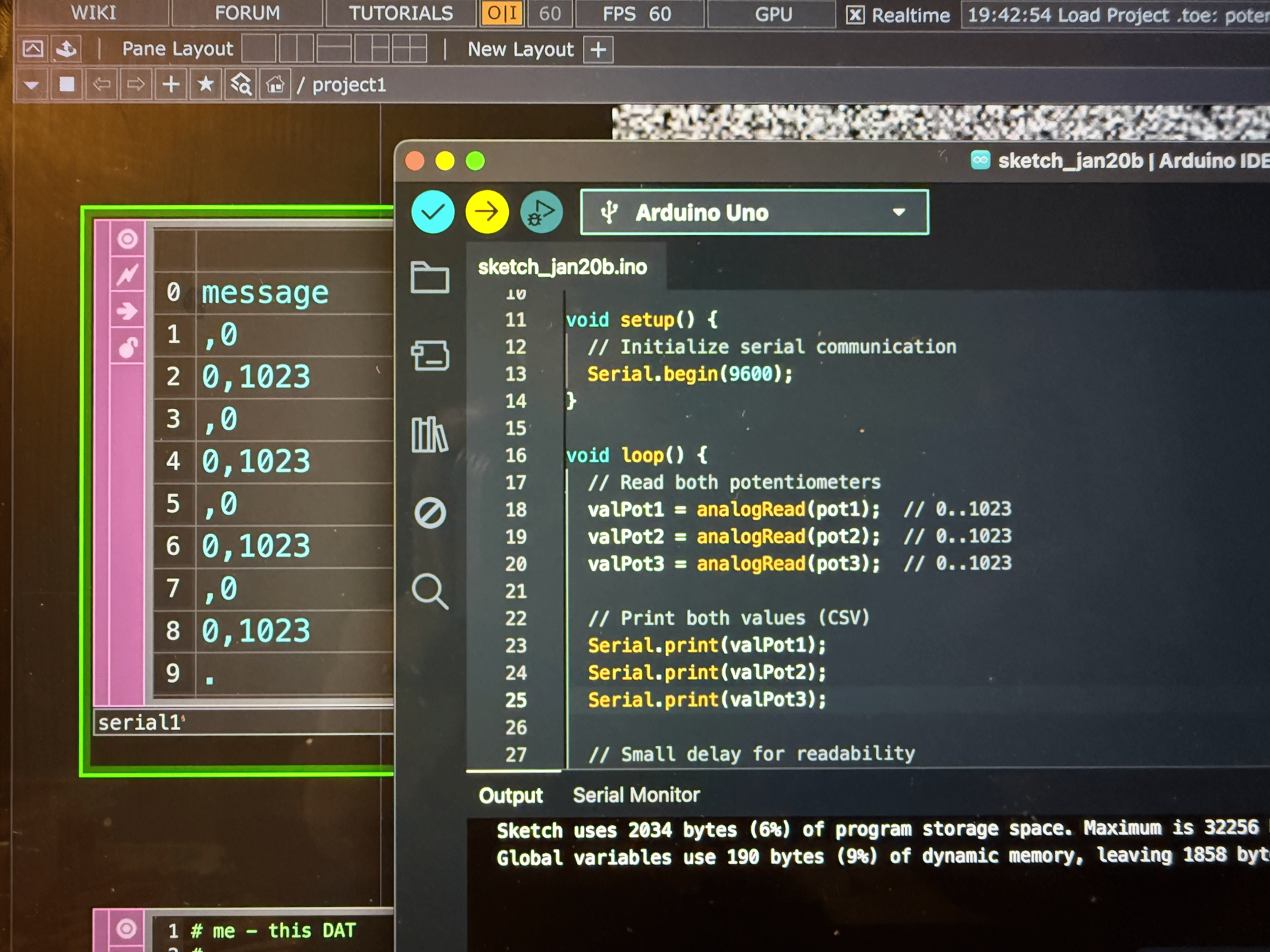

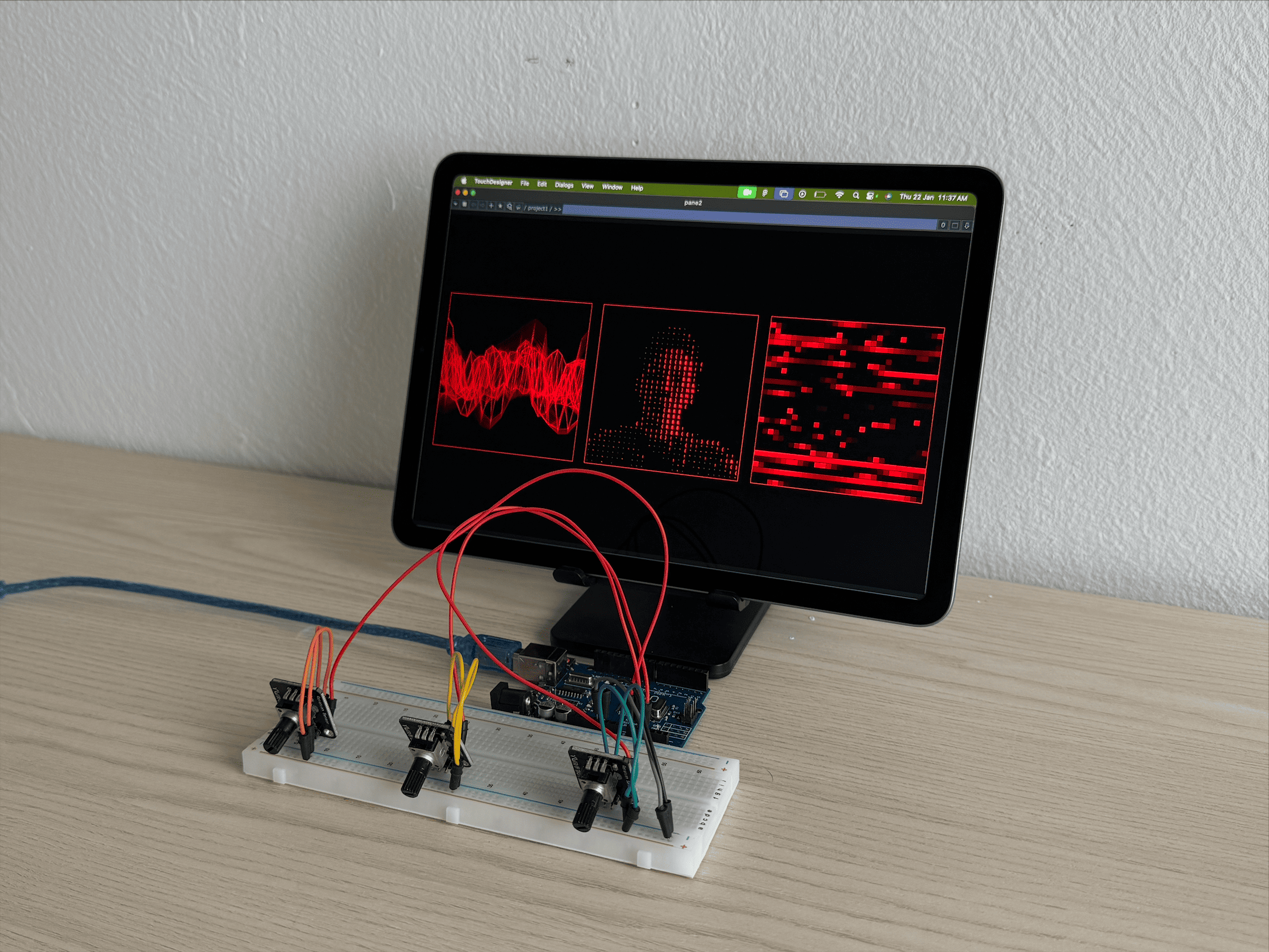

I purchased three potentiometers from Sim Lim Tower and wired them to an Arduino. While I managed to upload the code successfully, I ran into issues in TouchDesigner. I could not reliably separate the three potentiometer values into distinct channels without them interfering with one another. This made it difficult to map each input to specific visual parameters.
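One way I could approach the channel-separation problem is sketched below in Python. This is not my actual TouchDesigner patch. It assumes the Arduino prints all three readings on a single line, separated by commas and ending with a newline (for example `512,0,1023`), and that the readings use the usual 0 to 1023 range of a 10-bit analog input. The function name `parse_pot_line` and its parameters are hypothetical.

```python
def parse_pot_line(line, count=3, max_reading=1023):
    """Split one serial line such as '512,0,1023' into separate 0.0-1.0 values.

    Assumption: the Arduino sends every reading on one comma-separated line.
    Returns None for incomplete or corrupted lines so they can be skipped.
    """
    parts = line.strip().split(",")
    if len(parts) != count:
        return None  # a partial line would shift values into the wrong channel
    try:
        readings = [int(p) for p in parts]
    except ValueError:
        return None  # non-numeric debris, e.g. from a half-received line
    # Clamp to the expected range, then normalise each channel independently
    return [min(max(r, 0), max_reading) / max_reading for r in readings]


values = parse_pot_line("512,0,1023\r\n")
if values is not None:
    pot_a, pot_b, pot_c = values  # one value per potentiometer
```

Rejecting any line that does not contain exactly three numbers is the key design choice here: if a partial line is accepted, one potentiometer's value can land in another's channel, which would look like the inputs interfering with one another.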

While troubleshooting, I realised how easily I became frustrated. Part of this came from the pressure of wanting the system to work quickly, but another part came from questioning whether building my own controller was even necessary. Would a ready-made option such as a MIDI controller be more practical? This is something I plan to discuss further with Andreas.

Putting the controller aside for a moment, I shifted my focus to developing the visuals. This was unexpectedly challenging and took a while, because I kept changing my mind about how the visuals should represent emotions. I searched extensively for tutorials, but few were suitable. Eventually, I simplified my approach and drew inspiration from Tron: Ares (2025). Using pixels, ASCII characters and grid-based structures felt like an effective way to visualise emotions as processed data. They appeared minimal, legible and computational.

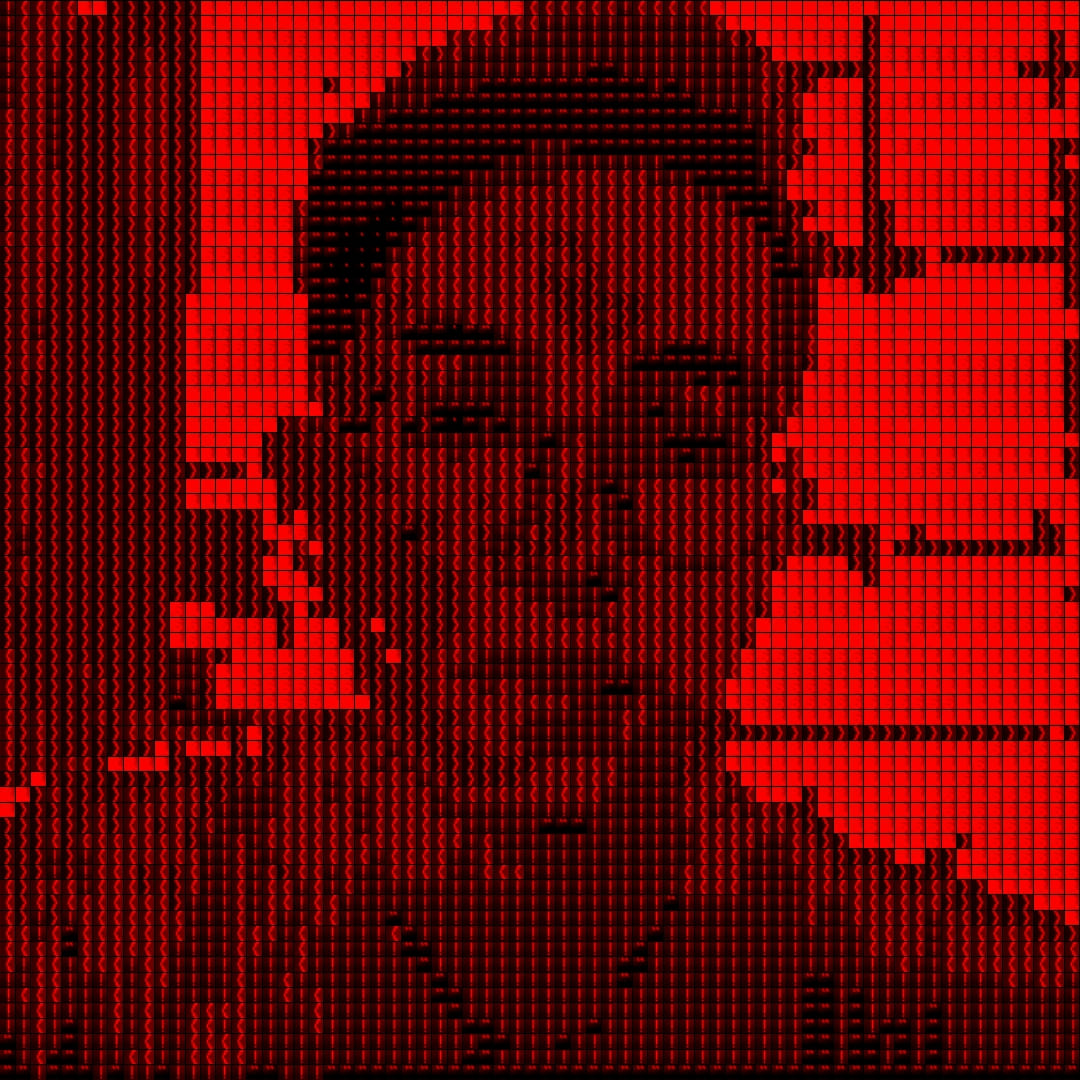

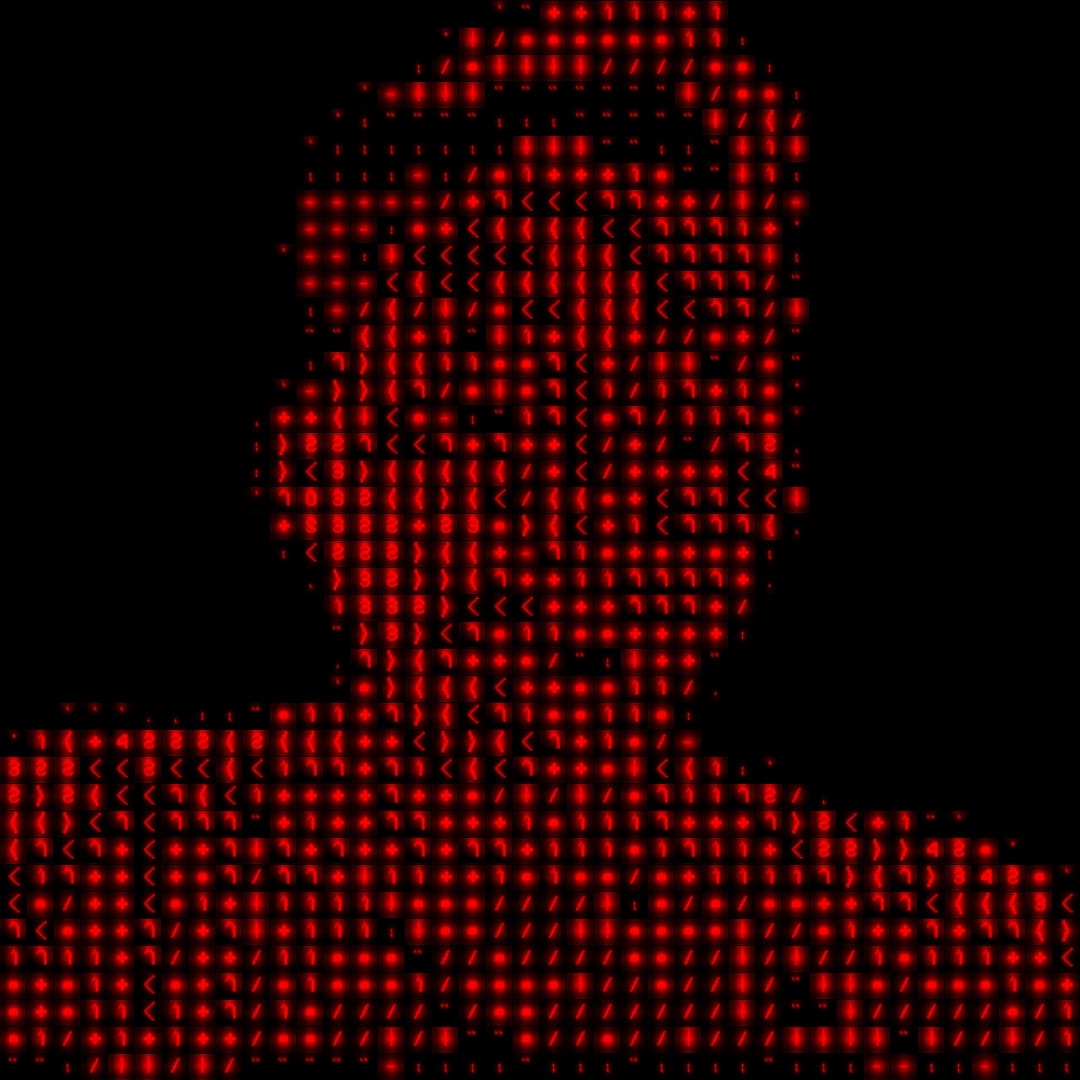

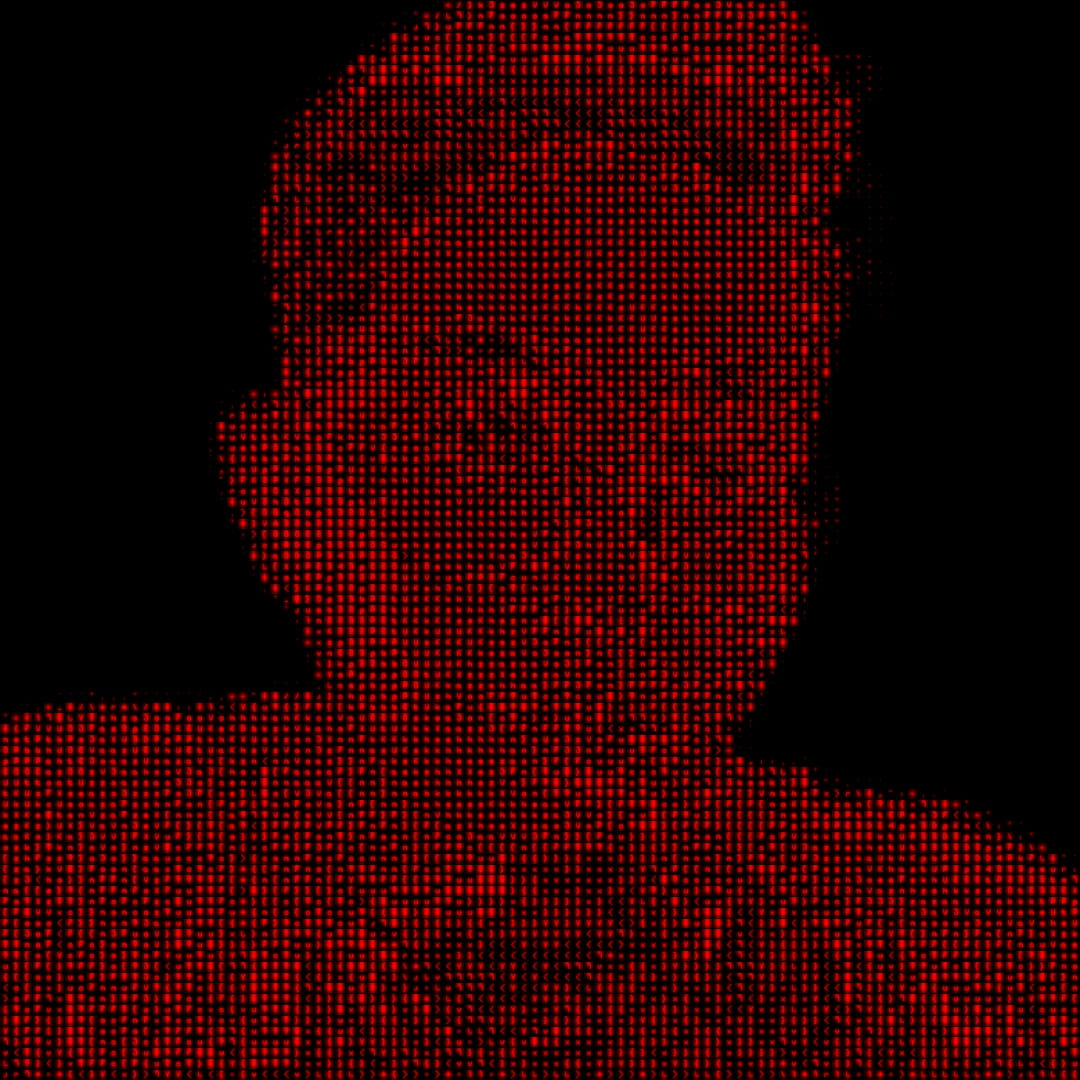

① ASCII Portrait — ASCII art is a widely used visual language that represents images through printable text characters. I am particularly drawn to this aesthetic because it clearly conveys the transformation of the physical world into processed data. The abstraction still retains traces of the human form, which reinforces the idea of perception being translated into computation. Moving forward, I plan to reuse this effect and explore it further.
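To make the underlying idea concrete, here is a minimal Python sketch of a brightness-to-character mapping, which is the general technique behind ASCII portraits. It is an illustration rather than the TouchDesigner network I built. The character ramp and the function name `to_ascii` are my own assumptions, and the input is assumed to be rows of greyscale values from 0 to 255.

```python
RAMP = " .:-=+*#%@"  # darkest to brightest; an arbitrary, hypothetical ramp


def to_ascii(gray_rows, ramp=RAMP):
    """Convert rows of 0-255 brightness values into lines of characters."""
    lines = []
    for row in gray_rows:
        chars = []
        for value in row:
            value = min(max(int(value), 0), 255)  # clamp to the valid range
            # Scale brightness to an index into the ramp
            index = value * (len(ramp) - 1) // 255
            chars.append(ramp[index])
        lines.append("".join(chars))
    return lines


# A tiny 2x2 "image": black, white, mid-grey, dark grey
for line in to_ascii([[0, 255], [128, 64]]):
    print(line)
```

Each pixel is reduced to one of ten characters, so detail is lost but the overall light and dark shapes of a face would remain, which matches the sense of a human form surviving its translation into data.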

② Pixel Board — Inspired by the work of visual artist Ryoji Ikeda, this visual converts noise output into discrete pixel blocks to achieve a data-driven appearance. By adjusting the threshold, the density of black and white pixels shifts, altering the visual intensity. I see this as a potential representation of emotional arousal. The greater the number of active pixels, the stronger the emotional state being expressed.
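The thresholding logic can be sketched in a few lines of Python. This is a simplified stand-in for the TouchDesigner setup, not a copy of it: it assumes the noise is a grid of values between 0 and 1, that a cell counts as active when its value exceeds the threshold, and that the share of active cells could serve as a rough arousal reading. All function names are hypothetical.

```python
import random


def make_noise(rows, cols, seed=0):
    """Generate a grid of pseudo-random values in [0, 1) as stand-in noise."""
    rng = random.Random(seed)
    return [[rng.random() for _ in range(cols)] for _ in range(rows)]


def pixel_board(noise, threshold):
    """Mark a cell active (1) when its noise value exceeds the threshold."""
    return [[1 if value > threshold else 0 for value in row] for row in noise]


def active_fraction(board):
    """Share of active cells, read here as a rough proxy for arousal."""
    cells = [cell for row in board for cell in row]
    return sum(cells) / len(cells)


noise = make_noise(8, 8)
calm = active_fraction(pixel_board(noise, 0.8))     # high threshold, few active cells
intense = active_fraction(pixel_board(noise, 0.2))  # low threshold, many active cells
print(calm, intense)
```

Under these assumptions, lowering the threshold activates more pixels, so a control mapped to arousal would need to drive the threshold in the opposite direction.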

③ Grid Waves — While this visual is less compelling to me compared to the previous two, it remains an interesting exploration of motion and feedback. The fluctuating waves, combined with the feedback loop, create a sense of continuous transformation, which still aligns with the theme of emotion as something dynamic and unstable.

CONSULTATION FEEDBACK

After reviewing this week’s progress, I admitted that I was not satisfied with the outcome and brought my concerns to Andreas.

① Physical Interaction — I shared that building my own controller felt cumbersome and that using a MIDI controller might be more efficient. Andreas suggested trying the Korg nanoKONTROL, as it offers multiple sliders and buttons suitable for experimentation. While I was concerned about the aesthetic mismatch, he reassured me that we could troubleshoot my custom controller together.

② Relating to Users — I also expressed concerns about whether users would understand or relate to the interactions and visuals. What if the visuals didn’t make sense? Andreas advised me to look at existing projects that visualise emotion and study how their interactions function. If those systems are effective, then clarity and relatability will follow naturally.

We also briefly discussed the final outcome and my direction post-graduation. Since my current approach positions the prototype as a speculative ‘asset’ rather than a solution, Andreas suggested that it could exist as part of a branded series of films. This direction excites me, as it aligns with my background in film and creative direction. His comment that the ‘Mood Mirror’ video was successful gave me renewed confidence in pursuing this approach.